Deep Learning & Neural Networks

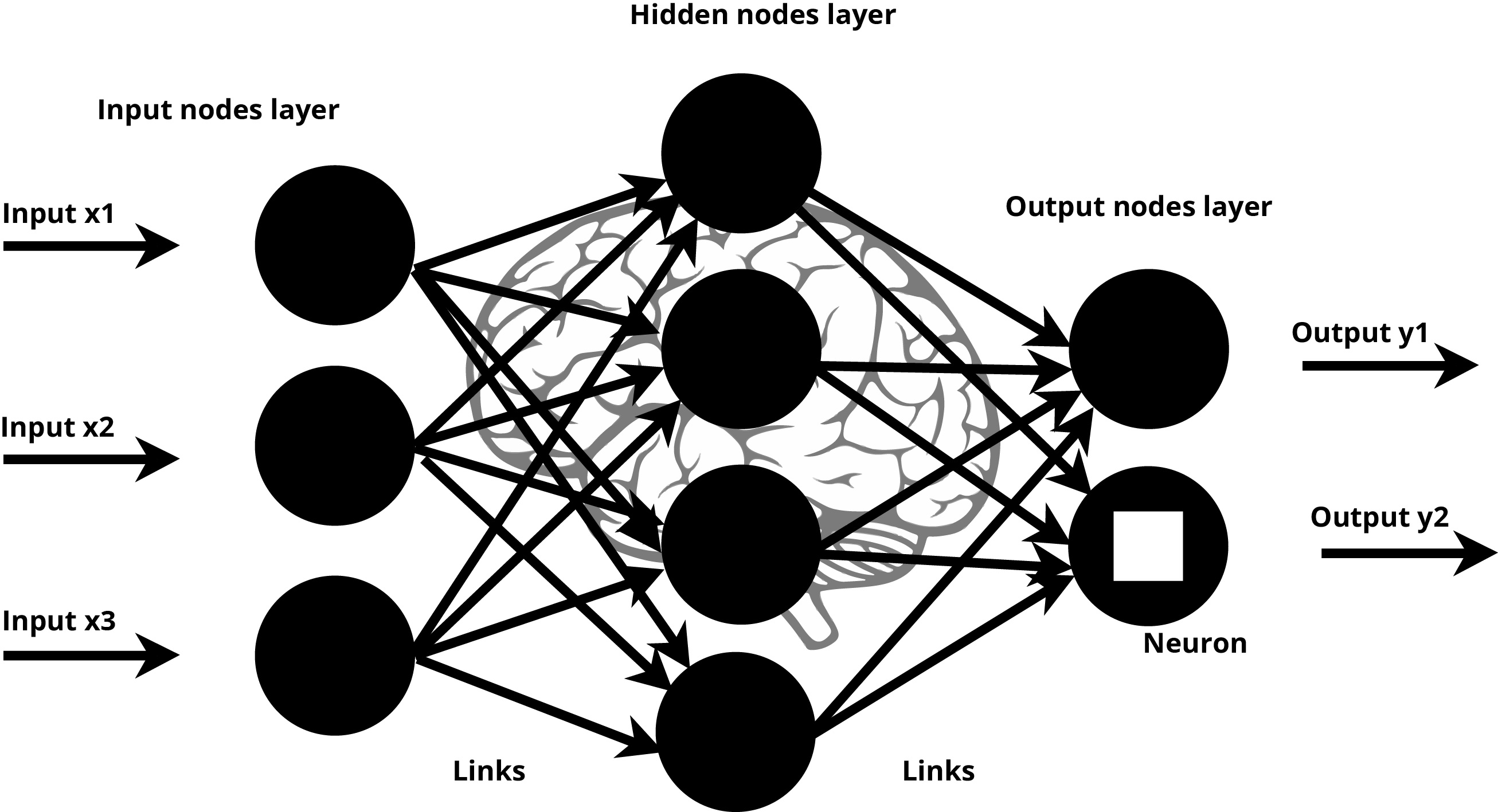

Visual Representation of a Neural Network

Deep Learning is a subfield of Machine Learning that produces high-performing prediction models. It uses Neural Network architectures with multiple hidden layers, inspired by the structure of the human brain. In the brain, neurons are the fundamental building blocks and transmit electrical pulses throughout our nervous system. In an artificial network, perceptrons play a similar role: they receive a list of input signals and transform them into output signals.

Deep Learning is based on artificial neural networks, which were designed to loosely mimic the working of the human brain. Similar to how humans learn from experience, deep learning algorithms learn through repeated iterations. Each time the network processes training examples, it measures its error and adjusts its internal weights accordingly. Over time, with more practice, this lets it improve and produce more accurate outcomes.

Several factors have caused Deep Learning to take off in the last few years:

The availability of large data sets has led engineers to look for scalable ways to address the challenges and opportunities they present. From facial recognition to speech recognition, these vast and varied datasets give deep models enough examples to learn useful features directly from the data, rather than relying on hand-crafted features.

Deep learning requires high-performance GPUs for computation. GPU manufacturers such as Nvidia and AMD have made it possible to train deep learning algorithms in a time-efficient manner.

The availability of these high-powered computing resources on a usage-based model (via on-demand cloud services) has made it easier for researchers, startups and established players to enter the Deep Learning space in a serious manner.

How do Deep Learning & Neural Networks Work?

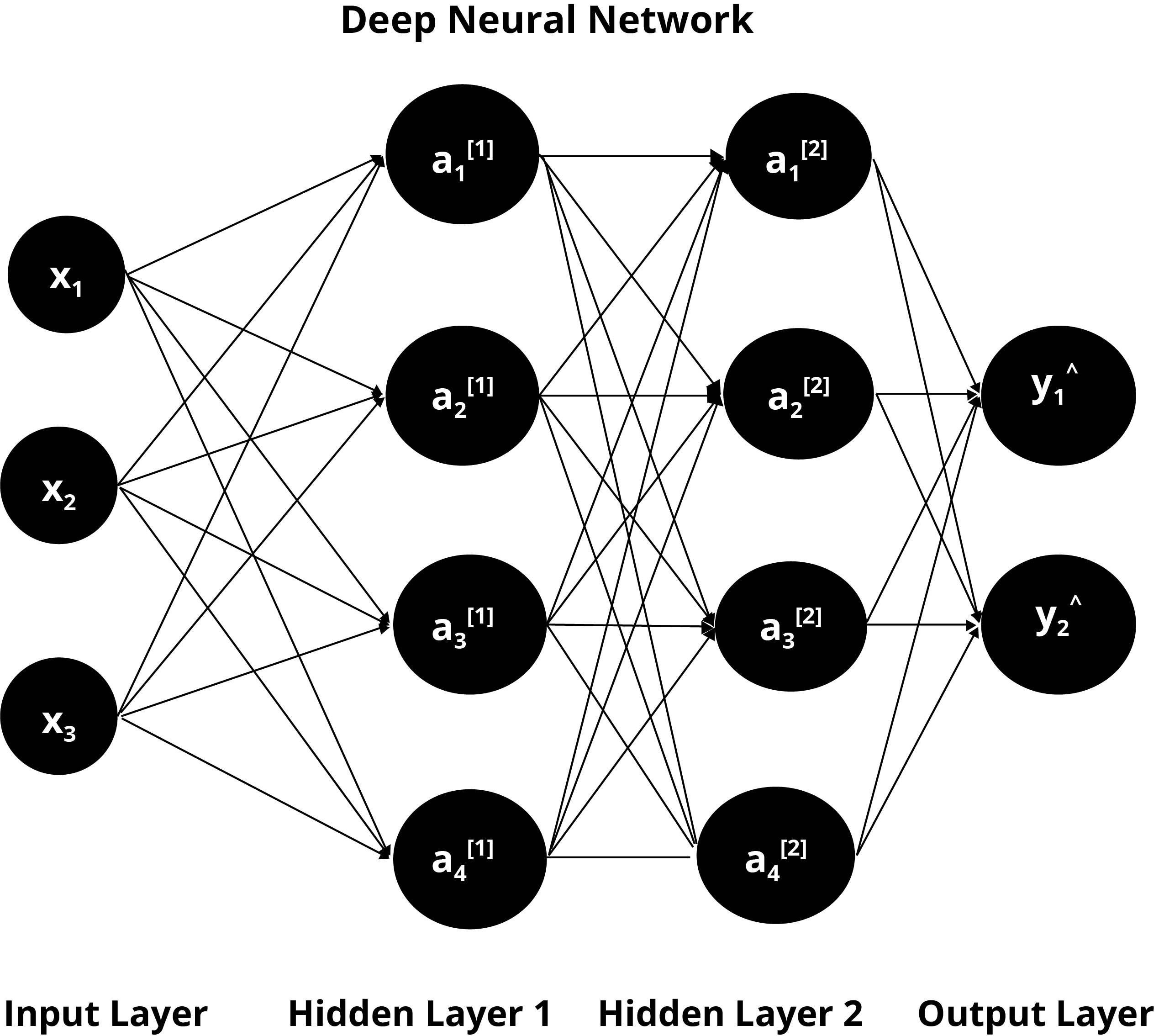

Deep Learning models learn by discovering intricate structures in data. These models are composed of multiple processing layers; the layers between the input layer and the output layer are called hidden layers. Adding hidden layers lets the model capture more complex patterns, which can produce more accurate prediction models.

Deep Neural Networks (DNN) as Artificial Neural Networks (ANN)

Artificial neural networks consist of interconnected artificial neurons, organised into layers and designed computationally to solve a specific problem.

A Deep Neural Network (DNN) is an artificial neural network (ANN) with multiple hidden layers. Each layer can serve a specific purpose, and it is the complexity of a problem that calls for multiple layers.

Components of ANN (Artificial Neural Networks)

We will discuss how ANNs work by breaking them down into their components and exploring each one.

Nodes

Artificial neural networks consist of a collection of nodes called artificial neurons. Nodes are points in these networks where computation takes place. Just as the neurons in our brain receive a stimulus, process the information, and transmit signals to other neurons, these nodes receive inputs, compute on them, and pass the result on to other nodes.

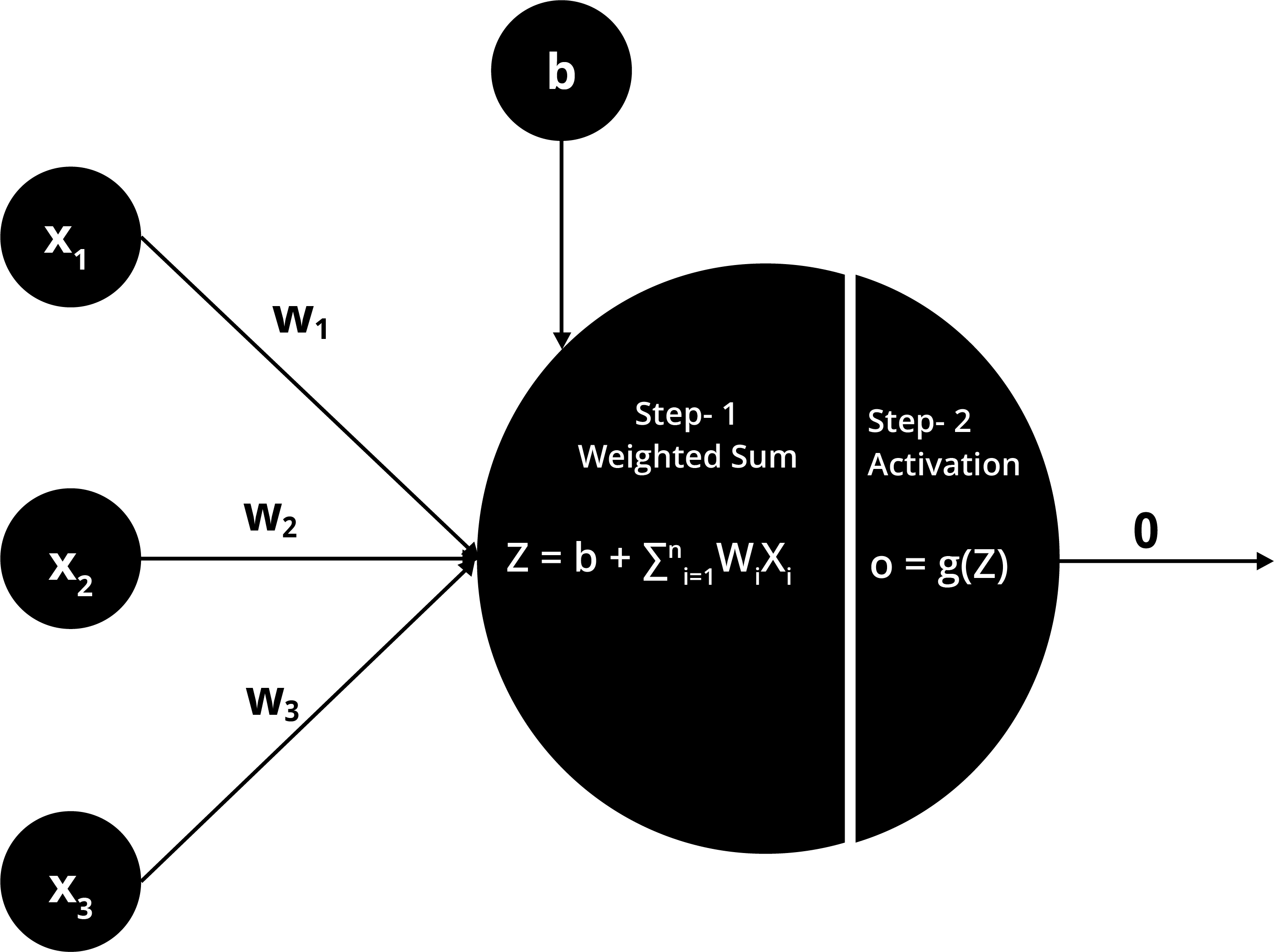

Weights

Each node in the artificial neural network is accompanied by weights that assign relative importance to each input. When the inputs are fed into the network, each input is multiplied by its weight (or coefficient). These input-weight products are summed and a bias term is added. The result is then passed to the node’s activation function.
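Written as a formula, using notation introduced here for illustration (inputs $x_i$, weights $w_i$, bias $b$, activation function $f$), a node with $n$ inputs computes:

```latex
z = \sum_{i=1}^{n} w_i x_i + b, \qquad a = f(z)
```

Here $z$ is the weighted sum plus bias, and $a$ is the node's output after the activation function.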

Bias

A bias is a constant term added to a node’s weighted sum that helps the model reduce prediction error. Before training starts, we assign it a default value such as 1, and it is then adjusted with each iteration to reduce errors in prediction. You can think of it as the node’s weighted sum when all the inputs are zero.
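A minimal Python sketch of a single node, assuming a sigmoid activation (the text does not fix one at this point). The weights and inputs are made-up illustrative values, not learned ones. The second call shows that with all inputs at zero, only the bias reaches the activation function.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic sigmoid: squashes z into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """Weighted sum of the inputs plus the bias, passed through a sigmoid."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)

weights = [0.5, -1.0, 2.0]  # illustrative values only
bias = 1.0                  # the default starting value mentioned above

print(neuron([1.0, 2.0, 0.5], weights, bias))  # z = 0.5 - 2.0 + 1.0 + 1.0 = 0.5
print(neuron([0.0, 0.0, 0.0], weights, bias))  # all inputs zero: output is sigmoid(bias)
```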

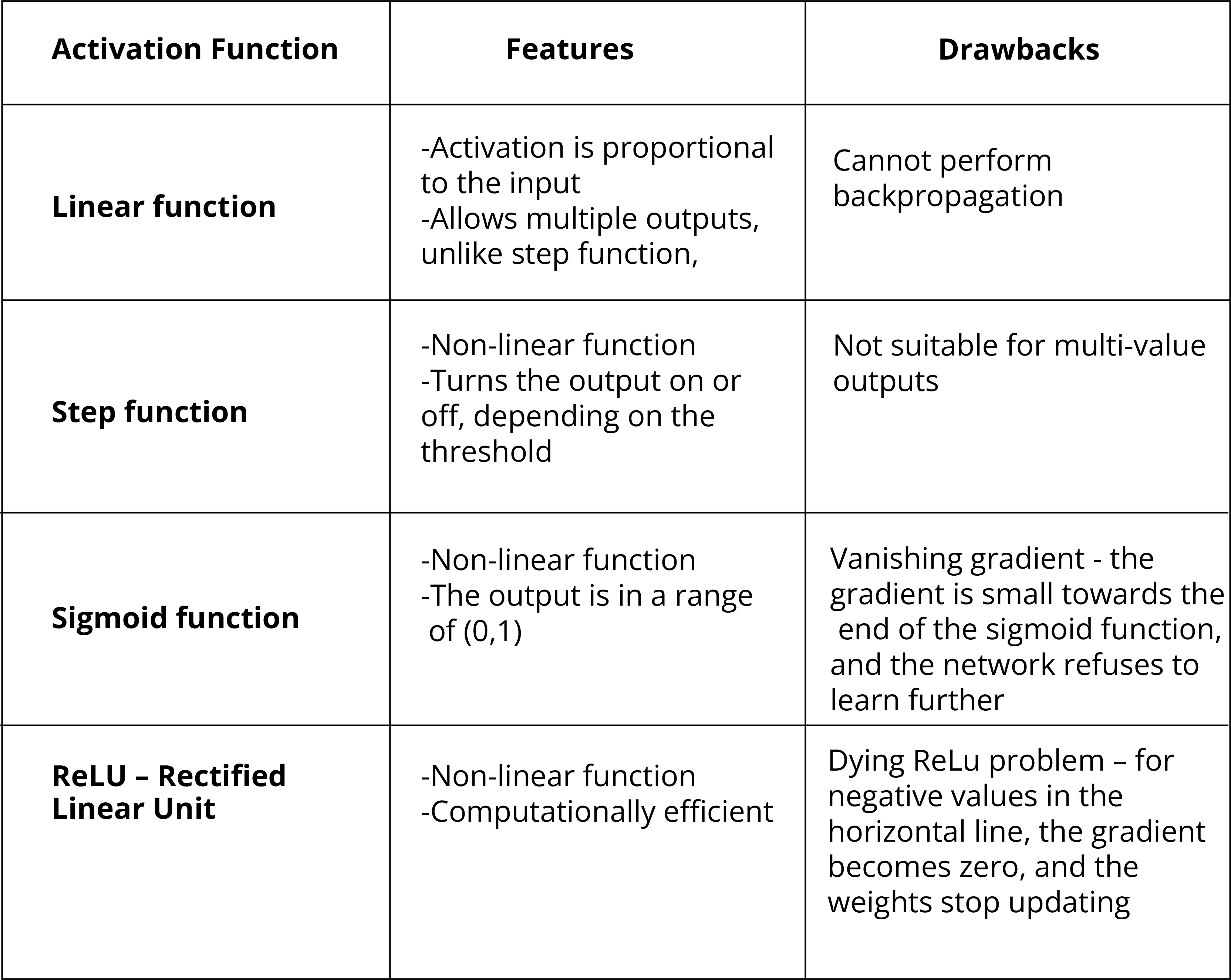

Activation Function

The activation function determines whether the input-weight products, summed with the bias, are significant enough to progress further in the network. If they are not, the node is simply not “fired”. There are many types of activation functions suited to particular applications, such as the step function, linear function, sigmoid function, etc. We will examine their features along with their drawbacks in the comparison table below.
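To make the named activation functions concrete, here is a small Python sketch of each. ReLU, which appears later in this article, is included for comparison, and the step function's threshold of 0 is an assumption chosen for illustration.

```python
import math

def step(z: float, threshold: float = 0.0) -> float:
    """Fires (1) only when z reaches the threshold; otherwise outputs 0."""
    return 1.0 if z >= threshold else 0.0

def linear(z: float) -> float:
    """Identity: passes z through unchanged, so it adds no non-linearity."""
    return z

def sigmoid(z: float) -> float:
    """Smoothly maps z into (0, 1); flattens out for large |z|."""
    return 1.0 / (1.0 + math.exp(-z))

def relu(z: float) -> float:
    """Outputs z for positive inputs and 0 otherwise."""
    return max(0.0, z)

for z in (-2.0, 0.0, 2.0):
    print(z, step(z), linear(z), round(sigmoid(z), 3), relu(z))
```

The step function gives a hard fire/don't-fire decision, while the sigmoid gives a graded output; the flat regions of the sigmoid for large |z| are one of the drawbacks usually listed for it.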

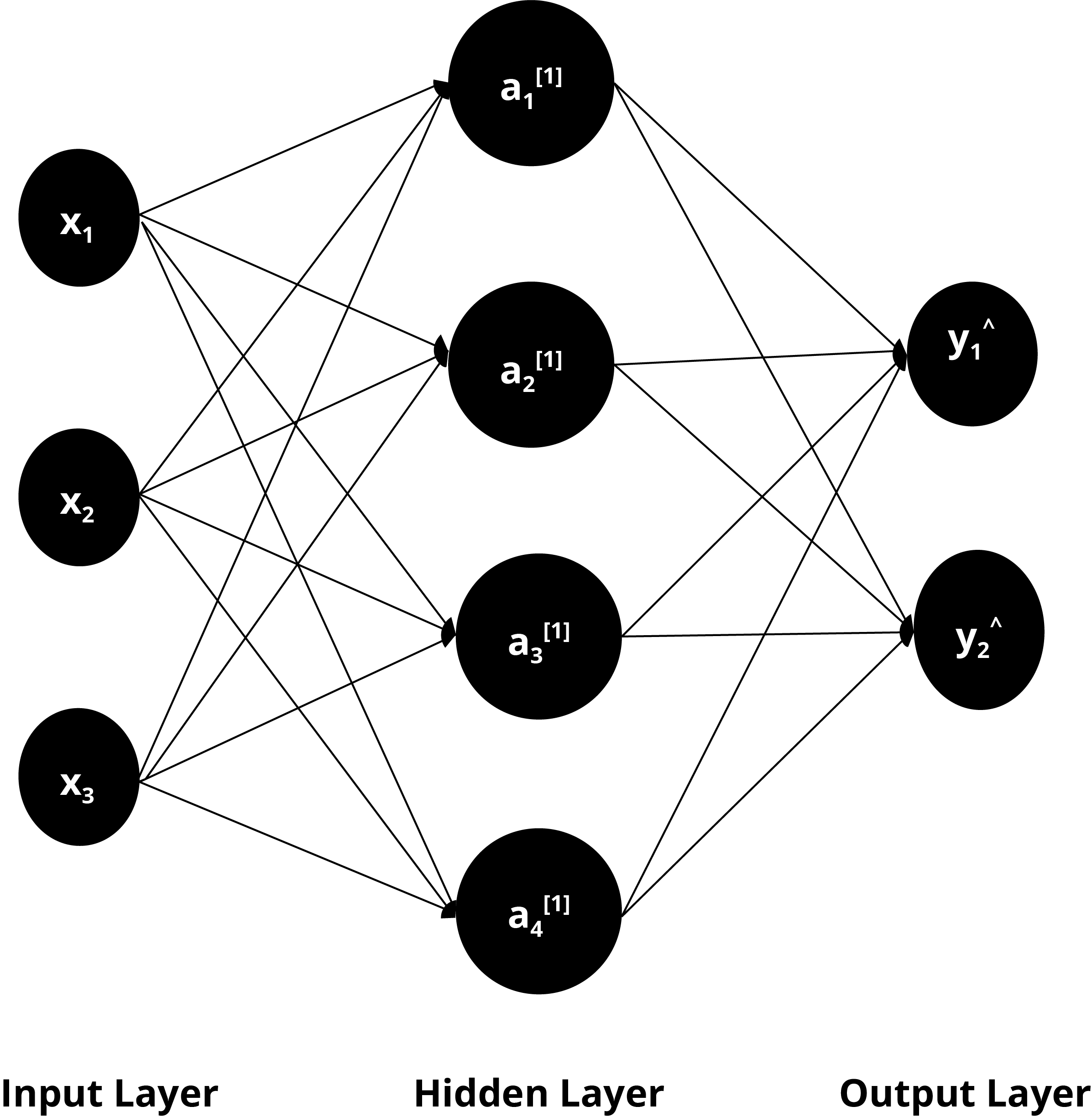

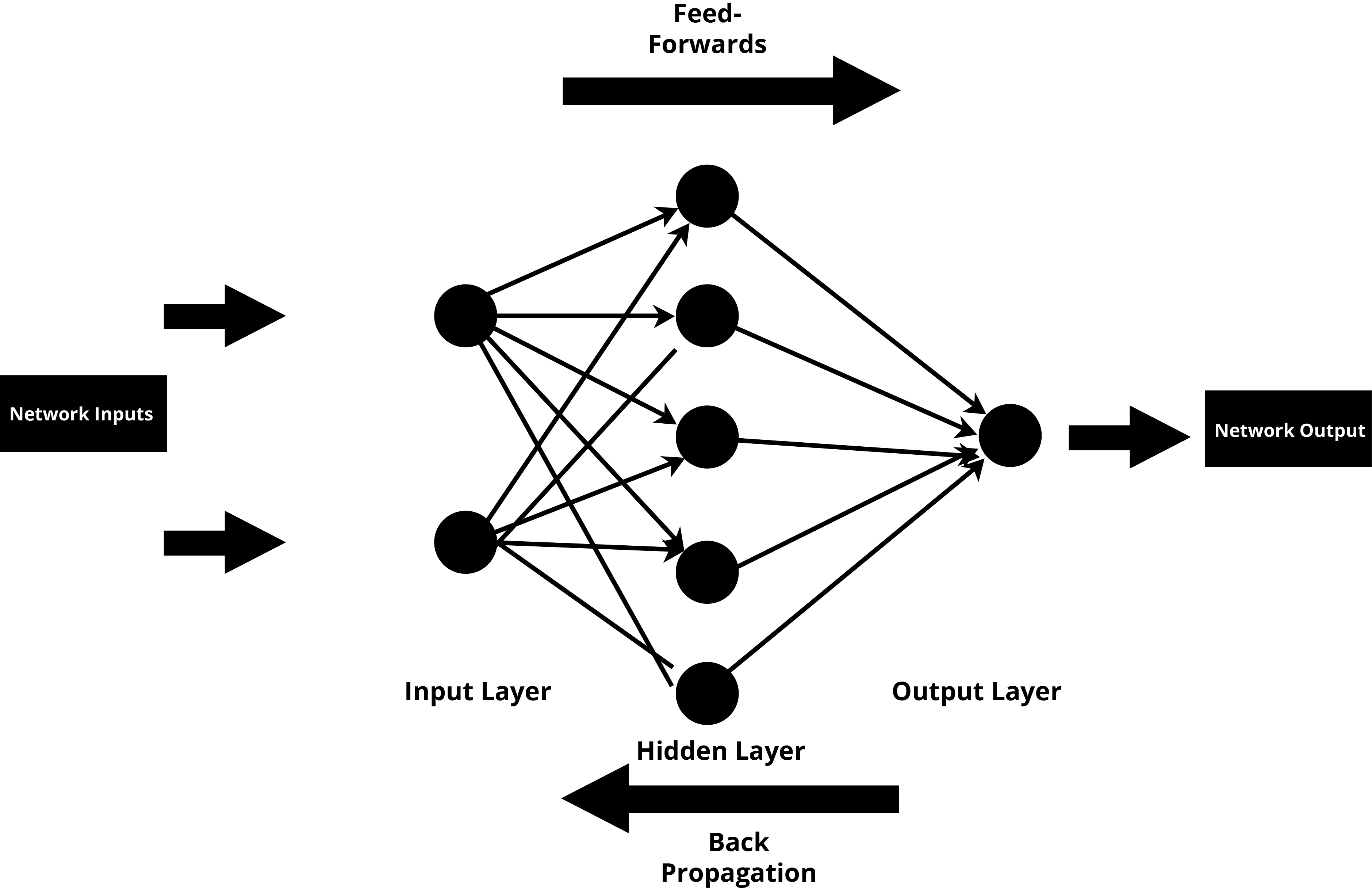

Layers in Deep Neural Network

Deep neural networks are composed of layers of nodes; the inputs are transformed layer by layer through activation functions and passed on until they reach the output layer. The first layer is called the input layer. The last layer is called the output layer, and all the layers in between are called the hidden layers. The more hidden layers we have, the ‘deeper’ a neural network is said to be.

The right number of hidden layers depends on the complexity of the problem. Adding hidden layers may increase the accuracy of our results, but adding more than the problem needs can cause the model to overfit.
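A minimal sketch of how layers chain together, in plain Python. The layer sizes and weight values are hypothetical, and every node uses a sigmoid activation for simplicity. Each `(weights, biases)` pair is one layer, so adding another pair in the middle adds a hidden layer and makes the network deeper.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def dense_layer(inputs, weights, biases):
    """One fully connected layer: each row of `weights` feeds one node."""
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

def forward(inputs, layers):
    """Pass the input through each (weights, biases) layer in turn."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations

# Hypothetical network: 2 inputs -> 3 hidden nodes -> 2 hidden nodes -> 1 output.
network = [
    ([[0.2, -0.4], [0.7, 0.1], [-0.5, 0.3]], [0.0, 0.1, -0.1]),  # hidden layer 1
    ([[0.3, -0.2, 0.6], [-0.1, 0.4, 0.2]], [0.05, -0.05]),         # hidden layer 2
    ([[0.8, -0.6]], [0.1]),                                        # output layer
]

print(forward([1.0, 0.5], network))
```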

Calculating the threshold of acceptance of results

Building a deep learning model means that our ultimate goal is to make our results useful for whatever problem we are trying to solve. The outcome is therefore a direct measure of our deep learning model’s performance. But how do we determine the correctness of our results? We set up a threshold for this purpose. A threshold function acts as a binary classifier in our neural network: results above the threshold are assigned the value 1 (accepted), and results below it are assigned the value 0 (rejected).
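A sketch of the threshold function as a binary classifier. The scores are hypothetical model outputs, and treating a score exactly equal to the cutoff as accepted is an assumption, since the text only specifies above and below.

```python
def threshold(score: float, cutoff: float) -> int:
    """Binary classifier: 1 (accepted) at or above the cutoff, 0 (rejected) below."""
    return 1 if score >= cutoff else 0

scores = [0.12, 0.47, 0.50, 0.81, 0.95]     # hypothetical model outputs
print([threshold(s, 0.5) for s in scores])  # [0, 0, 1, 1, 1]
```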

1. Is there an ideal number?

Based on the threshold, each sample is assigned to one of the classes. Based on our model’s performance in terms of sensitivity (the true positive rate, i.e. no or few false negatives) and specificity (the true negative rate, i.e. no or few false positives), we can fine-tune the threshold to accommodate the samples. So the threshold, together with the inputs and their weights, can be adjusted to reach the desired outcome. There is no single ideal value, such as 0.5 between 0 and 1, because the right threshold is problem-dependent.

For example, in our hypothetical grocery store, we would like to email a 15% off coupon to users who abandon their shopping carts. We would build a classifier that predicts which users will not proceed to checkout, because we do not want to send coupons to regular customers who are going to proceed through to the checkout anyway. So we may decide that users with an abandonment score above 0.8 should get the coupon.
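The trade-off between sensitivity and specificity can be checked numerically. The scores below are hypothetical abandonment scores and the labels (1 = abandoned the cart) are invented for illustration. The function computes both metrics at several cutoffs, including the 0.8 mentioned above.

```python
def sensitivity_specificity(scores, labels, cutoff):
    """Return (sensitivity, specificity) when predicting 1 for scores >= cutoff.

    Assumes `labels` contains at least one positive (1) and one negative (0).
    """
    tp = fn = tn = fp = 0
    for score, label in zip(scores, labels):
        predicted = 1 if score >= cutoff else 0
        if label == 1 and predicted == 1:
            tp += 1      # abandoner correctly flagged
        elif label == 1:
            fn += 1      # abandoner missed
        elif predicted == 0:
            tn += 1      # regular customer correctly left alone
        else:
            fp += 1      # regular customer wrongly flagged
    return tp / (tp + fn), tn / (tn + fp)

scores = [0.10, 0.35, 0.40, 0.55, 0.60, 0.70, 0.85, 0.90]
labels = [0,    0,    1,    0,    1,    0,    1,    1]

for cutoff in (0.3, 0.5, 0.8):
    sens, spec = sensitivity_specificity(scores, labels, cutoff)
    print(f"cutoff={cutoff}: sensitivity={sens:.2f}, specificity={spec:.2f}")
```

On this toy data, a cutoff of 0.3 catches every abandoner (sensitivity 1.00) but flags most regular customers (specificity 0.25), while 0.8 sends no coupons to regular customers (specificity 1.00) at the cost of missing half the abandoners (sensitivity 0.50).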

2. Determining the threshold

Building a deep learning model is an iterative process: you make tweaks to the nodes and layers and then re-evaluate your model.

We start by initializing all the weights to random values (including the threshold of each neuron). We then train our model by feeding it inputs and computing the error as the difference between the expected result and our network’s output. The function that measures this error is called the cost function. Ideally, we want the cost to be zero.
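One common choice of cost function is mean squared error. The text does not name a specific one, so this is an assumption (cross-entropy is another common choice). The targets and outputs below are hypothetical.

```python
def mean_squared_error(expected, predicted):
    """Average of the squared differences between targets and network outputs."""
    return sum((e - p) ** 2 for e, p in zip(expected, predicted)) / len(expected)

expected = [1.0, 0.0, 1.0, 0.0]   # what the network should output
predicted = [0.9, 0.2, 0.6, 0.1]  # what it actually output
print(mean_squared_error(expected, predicted))
```

For these values the cost is about 0.055; it would be exactly 0 only if every output matched its target.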

The challenge, then, is to find the right weights. For this purpose, we use backpropagation, which works out how much each weight (including each neuron’s threshold) contributes to the error, together with an optimization technique such as gradient descent, which adjusts the weights a little in each iteration until the network gives the right output for the given inputs.
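A minimal sketch of this loop for a single sigmoid neuron learning logical OR, a toy task chosen for illustration. With only one layer, backpropagation reduces to a single application of the chain rule; in a multi-layer network the same rule is applied layer by layer, from the output back to the input. The squared-error cost, learning rate of 1.0, zero initialization (instead of random, so the run is reproducible) and 5,000 epochs are all assumptions.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

# Training data for logical OR: (inputs, expected output).
data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 1.0)]

weights = [0.0, 0.0]
bias = 0.0            # plays the role of the neuron's (negated) threshold
learning_rate = 1.0

for _ in range(5000):
    grad_w = [0.0, 0.0]
    grad_b = 0.0
    for x, y in data:
        a = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
        # Chain rule for the cost 0.5 * (a - y)**2 through the sigmoid.
        delta = (a - y) * a * (1.0 - a)
        for i, xi in enumerate(x):
            grad_w[i] += delta * xi
        grad_b += delta
    # Move every parameter a small step against its gradient.
    weights = [w - learning_rate * g for w, g in zip(weights, grad_w)]
    bias -= learning_rate * grad_b

def predict(x):
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

for x, y in data:
    print(x, y, round(predict(x), 3))
```

After training, rounding each output reproduces the OR targets, and the bias ends up negative, acting as the threshold the weighted inputs must overcome.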

There are two critical components to the Deep Learning Model:

A. Cells and B. Layers

Cells

We can consider a cell in Deep Learning as the equivalent of a neuron in the human brain. Much like a neuron that transmits information to other neurons in the human brain, a cell in Deep Learning sends information to other cells to generate the best overall outcome. Cells can transmit information forward or backward, can be connected to cells in separate layers, can hold values, and can have different weights reflecting the importance of the information they supply.

A basic neural network cell

This type of cell is the most fundamental type of neural network cell. It is used in feed-forward architecture, a network in which information moves in the forward direction only. The cell is connected to other neurons in the network through weighted connections, and each connection carries its own weight.

The weights are initialized as random numbers at the start, and they can be positive, negative, big, small, or even zero. The incoming inputs are multiplied by their weights and added together to give the cell’s value. There is one extra value called the bias, which has its own connection weight. The bias ensures that even if all the inputs are zero, the neuron can still have some activation.

Layers

A layer is a group of neurons that are connected to neurons in other layers, but not to neurons within the same group. Each layer can focus on a specific part of the overall task.

A visual depiction of layers in a Neural Network

In basic supervised models, we as users can see the input layer, comprising the input variables, and the output layer, which provides the predicted value. But in deep networks with many hidden layers, it is not possible to know exactly how each element affects the final output.

Potential use cases for Enterprises

Deep learning finally made speech recognition accurate enough to make Amazon’s Alexa and Apple’s Siri more conversational. Rohit Prasad, vice president and head scientist of Alexa, says that the ultimate goal for Alexa is long-term conversation capabilities.

The challenge in Natural Language Processing (NLP) has always been the interpretation of human language. Deep learning approaches to NLP are widely used for predictive analysis in these speech assistants, so that they can provide an appropriate response to humans.

Usually, modelling the data in AI requires training the algorithm with labelled data and then exposing it to unseen data sets for prediction. Deep learning takes this one step further by working directly on raw audio or speech, without first hand-crafting features from it. This ability to learn useful features automatically from raw data is known as “feature learning” (or representation learning), in contrast to manual “feature engineering”. However, the DL algorithm may need many training iterations to become accurate.

In our grocery store example, deep learning could focus on search and personalization. Based on the contents of the user’s fridge (if provided by the user) and their previous purchase pattern, we can create a personal shopping experience by suggesting a shopping list.

We can use deep learning to build conversational agents that answer phone calls about online grocery deliveries. These neural agents are essentially chatbots.

Google also provides a virtual agent service to customers built entirely on its own AI infrastructure using speech recognition, speech synthesis, and natural language processing.

Social media platforms are also leveraging the power of deep learning. Take the example of Pinterest: it uses a visual search tool to zoom in on specific objects in an image and recommend Related Pins. Like other industry giants such as Facebook, Google and IBM, Pinterest has to deal with a lot of data to train its artificial neural networks and optimize its services.

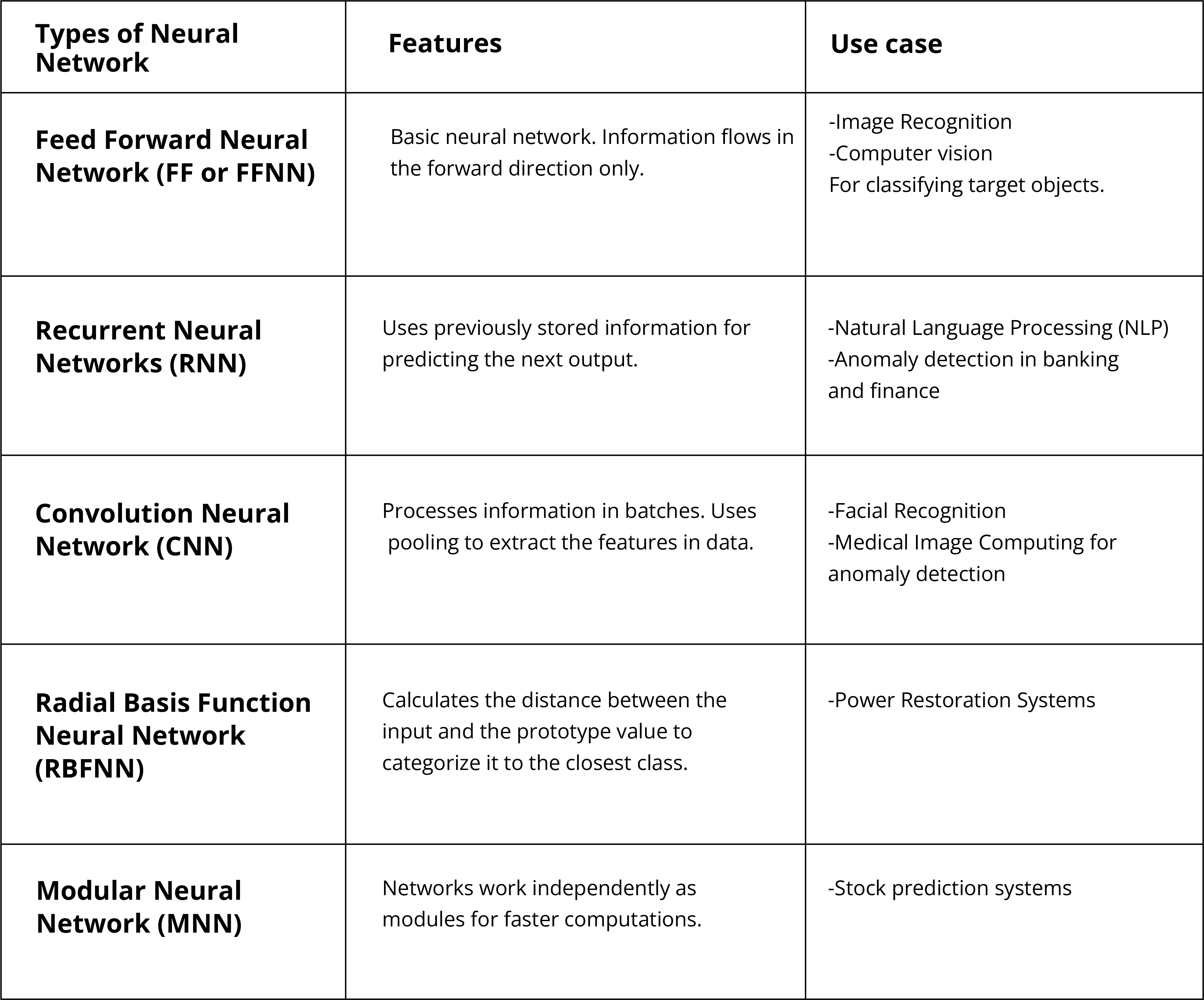

Different Types of Neural Networks

Here we will discuss different types of neural networks along with their applications. They each have their unique strengths and work on different principles to determine the outcome.

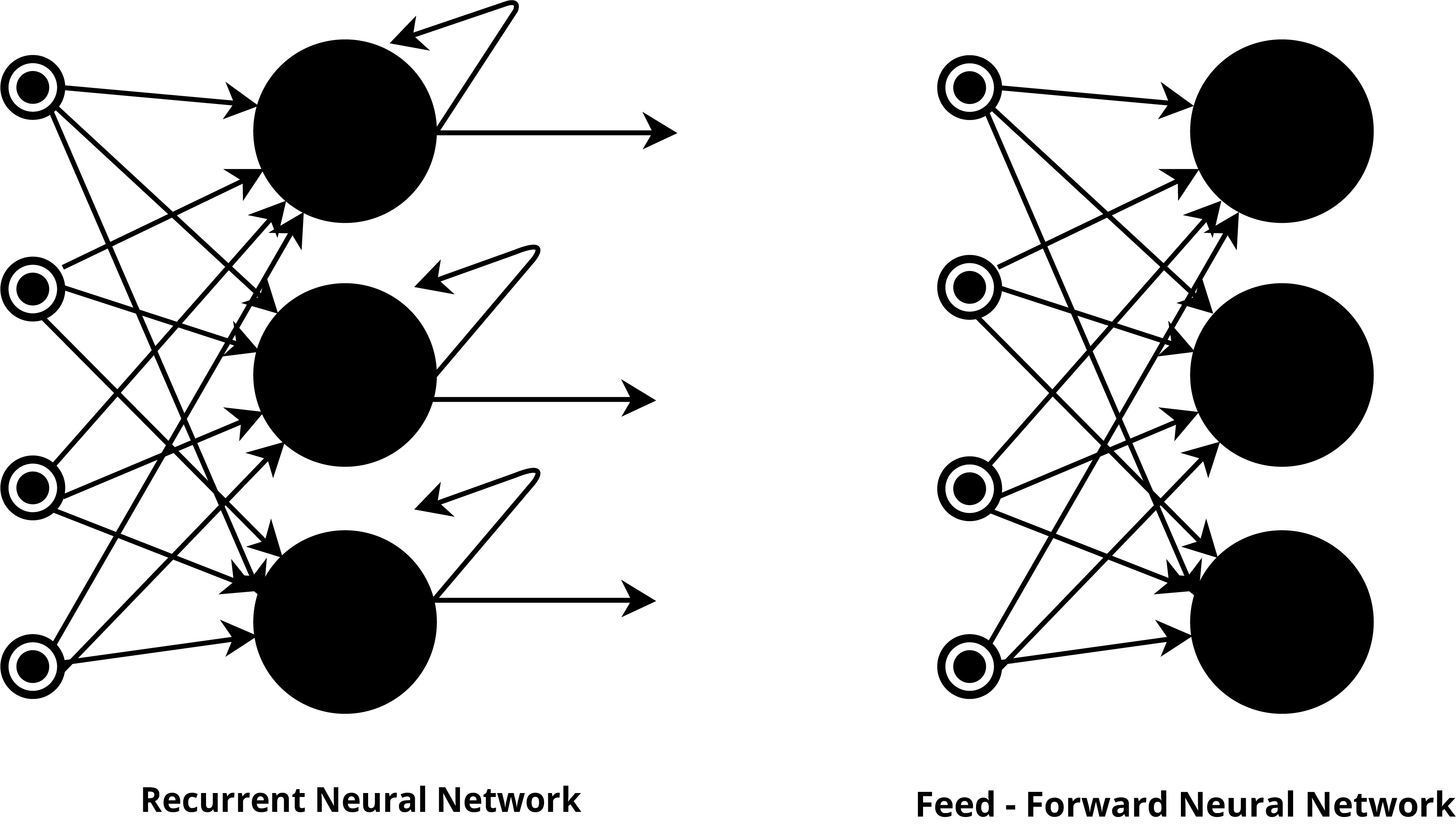

Feed Forward Neural Network (FF or FFNN)

This type of neural network was the first type of artificial neural network created for use in machine learning. These networks are called “feed-forward” because information flows in one direction only: from the input layer, through the hidden layers, to the output layer. Its working principle is the one we discussed earlier for a simple neural network: each input is multiplied by its weight, the products are summed with a bias, and the result is passed through an activation function before being fed to the next layer. A Sigmoid function or ReLU is commonly used as the activation function here.

How does this neural network learn?

It learns through the backpropagation algorithm. The output the network generates is compared with the expected output, and the weights are then updated to reduce the error gradually. These neural networks are less complicated than other types of neural networks, and faster, because information propagates in one direction only.

Potential Use case

Feed-forward neural networks are used for classification tasks, such as image recognition and computer vision, where inputs need to be assigned to target classes.

Recurrent Neural Networks (RNN)

Recurrent neural networks are a class of networks in which the output of a layer is fed back into it as input at the next step. This helps the layer predict its next outcome using what it has seen so far. The neuron cells in this network act as memory cells, storing information from the previous time step for future use. In a common arrangement, the first layer works like a feed-forward layer, and the subsequent layers have a recurrent architecture.
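A minimal sketch of the recurrence with scalar weights, assuming a tanh activation (a common choice; the text does not specify one). The weight values are hypothetical. Feeding in 1 followed by zeros shows the hidden state carrying information from the first step forward and fading over time.

```python
import math

def rnn_step(x_t, h_prev, w_x, w_h, b):
    """One time step: h_t = tanh(w_x * x_t + w_h * h_prev + b) (scalar case)."""
    return math.tanh(w_x * x_t + w_h * h_prev + b)

def run_rnn(sequence, w_x=0.8, w_h=0.5, b=0.0):
    """Run the recurrence over a sequence and return the hidden state at each step."""
    h = 0.0                                # hidden state starts empty
    states = []
    for x_t in sequence:
        h = rnn_step(x_t, h, w_x, w_h, b)  # previous state is fed back in
        states.append(h)
    return states

print(run_rnn([1.0, 0.0, 0.0]))
```

Even though the second and third inputs are zero, their hidden states are non-zero because they depend on the state produced at the first step.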

Potential Use case

Recurrent neural networks are well suited to tasks such as predicting the next word in a sequence, where retaining information from earlier words is key. Natural Language Processing (NLP) and anomaly detection use this network. For example, an RNN can be used to generate a customized report for a customer from their relevant information.

Similarly, to detect fraudulent activity on the internet, automated algorithms look for discernible patterns. An RNN can notice suspicious behaviour because it has already explored the data and ‘knows’ which sequences of events are typical.

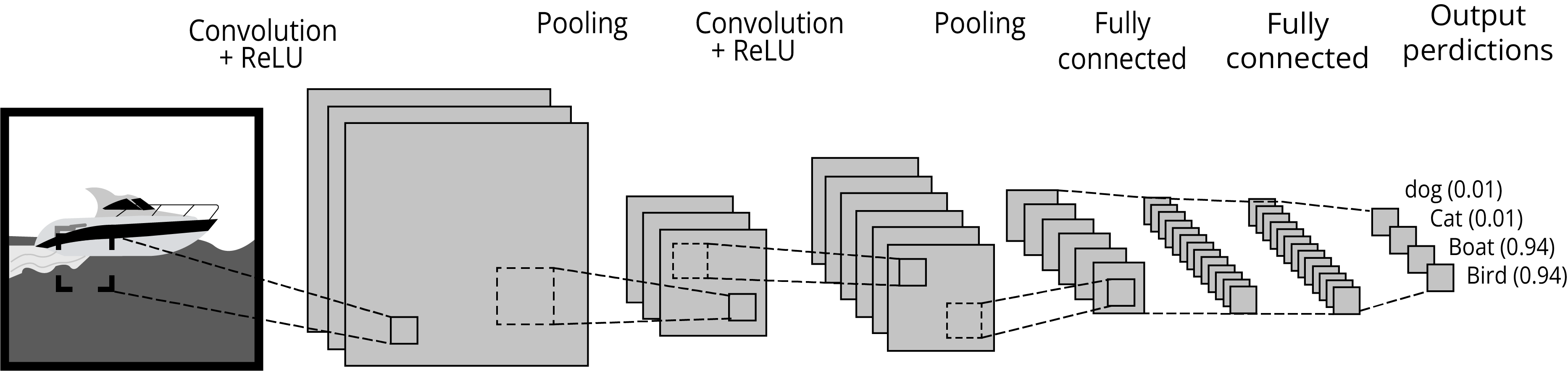

Convolutional Neural Network (CNN)

A Visual Depiction of a Convolutional Neural Network (CNN)

Convolutional Neural Networks are commonly applied in image processing to analyze visual imagery. The first layer is a convolutional layer, which applies a convolution operation to the input. This operation multiplies the input data by a set of weights called the filter (or kernel).

The CNN processes an image piece by piece: the filter examines one small region of the image before sliding on to the next, until it has covered the whole image. In convolutional layers, the neuron cells are not fully connected to every other cell in the network; each is connected only to a local region of the previous layer. CNNs also use pooling layers, which downsample the results and keep the most relevant information from the inputs.
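A plain-Python sketch of the two operations described above: a 2×2 filter slid across a small image with stride 1 and no padding, followed by 2×2 max pooling. The image and the vertical-edge filter are made up for illustration; real CNNs learn their filter weights during training.

```python
def convolve2d(image, kernel):
    """Valid (no padding), stride-1 2D convolution. As in most deep learning
    libraries, this is technically cross-correlation (the kernel is not flipped)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def max_pool(feature_map, size=2):
    """Non-overlapping max pooling: keep the largest value in each size x size block."""
    return [
        [
            max(feature_map[i + di][j + dj] for di in range(size) for dj in range(size))
            for j in range(0, len(feature_map[0]) - size + 1, size)
        ]
        for i in range(0, len(feature_map) - size + 1, size)
    ]

image = [
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
]
vertical_edge = [[-1, 1], [-1, 1]]  # responds to dark-to-bright vertical edges

features = convolve2d(image, vertical_edge)
print(features)
print(max_pool(features))
```

The filter outputs 2 where the image goes from dark (0) to bright (1), -2 where it goes back, and 0 elsewhere, so every row of the feature map is `[0, 2, 0, -2]`. Pooling then keeps the strongest response in each 2×2 block, giving `[[2, 0], [2, 0]]`.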

Potential Use case

Companies use CNNs in applications such as facial recognition. The CNN algorithm detects every face in the picture and then identifies the unique features of each face. By comparing this data with data stored in a database, it matches the face with a name. Social media platforms use this face recognition feature to tag your friends in photographs.

The healthcare industry also uses CNNs for medical image computing. They can help detect anomalies in X-ray and MRI images that the human eye might miss. Trained on large collections of medical images, the algorithm learns the differences between images and uses them for predictive analysis.

Radial Basis Function Neural Network (RBFNN)

This type of neural network uses a radial basis function as its activation function. This function measures the distance of a point relative to a center. Each neuron stores a prototype, typically taken from the training data. When an input is received, the neuron cells calculate the Euclidean distance between the input and their prototypes, and the network uses these distances to decide which category the input belongs to.
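A sketch of the idea using a Gaussian radial basis function, a common choice that the text does not specify. The prototypes and labels are hypothetical, and the output step here simply sums each class's activations with equal weight; in a trained RBFNN those output weights would be learned.

```python
import math

def gaussian_rbf(x, center, width=1.0):
    """Radial basis activation: 1 at the center, decaying with Euclidean distance."""
    dist_sq = sum((xi - ci) ** 2 for xi, ci in zip(x, center))
    return math.exp(-dist_sq / (2 * width ** 2))

# Hypothetical prototypes (e.g. picked from training data), each with a class label.
prototypes = [([0.0, 0.0], "A"), ([1.0, 1.0], "A"), ([4.0, 4.0], "B"), ([5.0, 3.0], "B")]

def classify(x):
    """Sum each class's RBF activations and pick the class with the largest total."""
    scores = {}
    for center, label in prototypes:
        scores[label] = scores.get(label, 0.0) + gaussian_rbf(x, center)
    return max(scores, key=scores.get)

print(classify([0.5, 0.2]))  # close to the "A" prototypes
print(classify([4.5, 3.8]))  # close to the "B" prototypes
```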

Potential Use case

RBFNN has found applications in power restoration systems. As power systems have become larger and more sophisticated, power outage issues have increased considerably. This algorithm helps restore power in the shortest possible time frame.

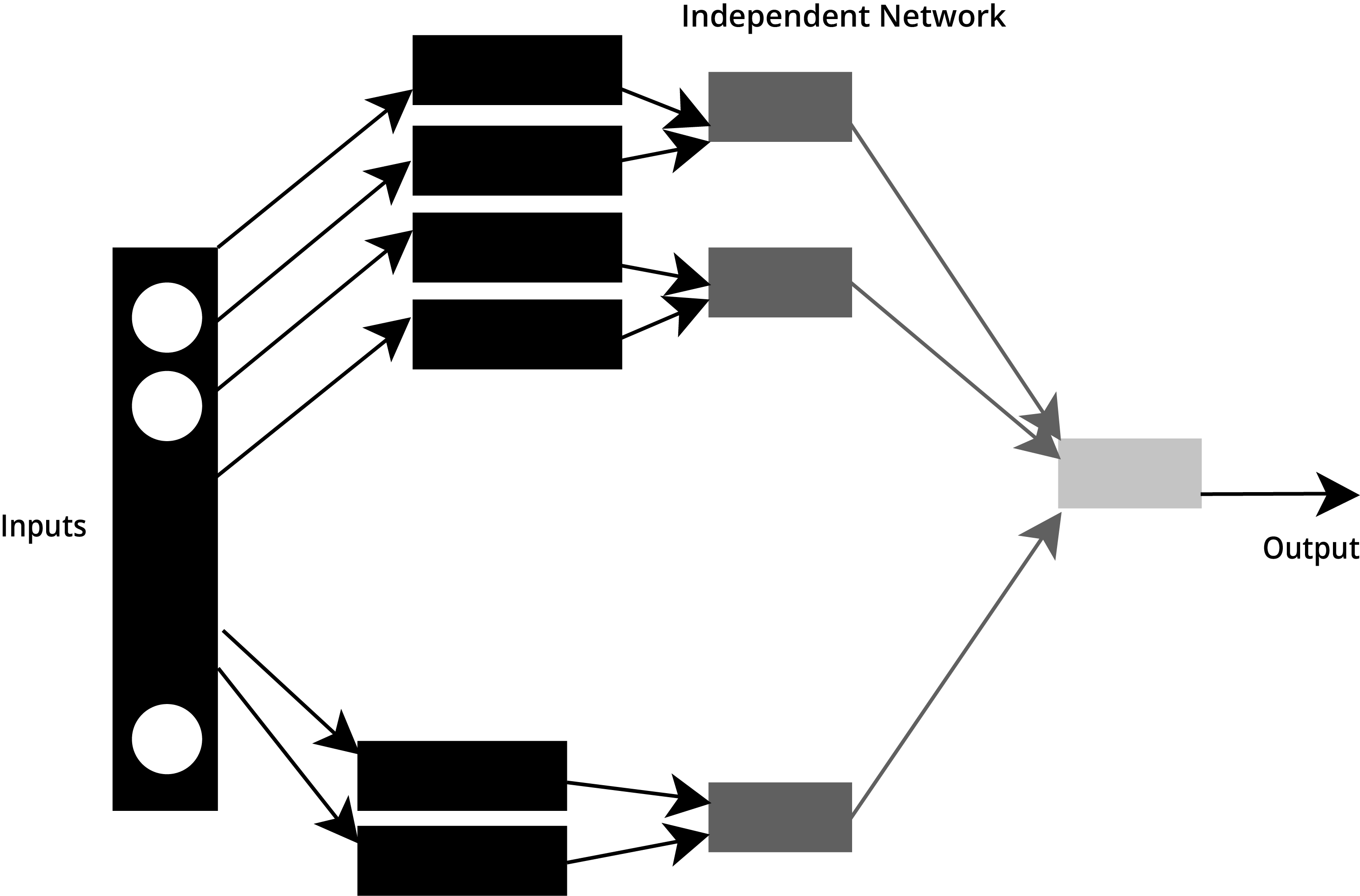

Modular Neural Network (MNN)

A visual representation of a Modular Neural Network

A modular neural network consists of many networks that work independently and perform sub-tasks. They do not interact with each other, and each module is responsible for its own sub-task. As a result, modular networks can work relatively faster on complex computational processes.

Potential Use case

MNN models have been applied to problems such as stock price prediction. Such a system is built from independent modules, each of which works on its own input and processes the information. A final processing unit gathers the results of all the modules to produce the output.
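A structural sketch of this design. The three modules are hypothetical stand-ins written as simple formulas rather than trained networks, and the final unit just averages their estimates. The point is only that each module works independently on its own input while a separate unit combines the results; this is not a real stock-prediction method.

```python
from statistics import mean

# Hypothetical independent modules: each sees only its own input and returns
# its own estimate. In a real MNN each would be a separately trained network.
def price_trend_module(prices):
    """Estimate the next price by extending the last change."""
    return prices[-1] + (prices[-1] - prices[-2])

def moving_average_module(prices, window=3):
    """Estimate the next price as the mean of the last `window` prices."""
    return mean(prices[-window:])

def volume_module(volumes, last_price):
    """Nudge the last price up if volume is rising, down if it is falling."""
    return last_price * (1.01 if volumes[-1] > volumes[-2] else 0.99)

def combine(estimates):
    """Final processing unit: here, a simple average of the module outputs."""
    return mean(estimates)

prices = [100.0, 102.0, 101.0, 103.0]
volumes = [5000, 5200, 4800, 5100]

estimates = [
    price_trend_module(prices),
    moving_average_module(prices),
    volume_module(volumes, prices[-1]),
]
print(estimates, combine(estimates))
```

Because the modules never call each other, any one of them could be retrained or replaced without touching the others, which is the main appeal of the modular design.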

https://ieeexplore.ieee.org/document/5726498

Some Deep Learning use case examples

Robotics

Much of the recent development in robotics is due to deep learning and AI. In the near future we will see robotics used in applications like driverless cars, sensing and responding to the environment, smart homes and many more. All of these are possible due to the adoption of deep learning and AI.

Agriculture

Deep learning helps farmers distinguish between crop plants and weeds, and spot signs of infestation and plant disease. This information helps machines decide what to spray, such as fertilizer, and where. This saves the farmer a lot of time and produces better results. Deep Learning has a wide scope for implementation in agriculture.

Healthcare

The Convolutional Neural Network is used for the classification of images, and classification is especially significant for medical images. For example, deep learning can help a dermatologist classify skin cancer, or support earlier detection of breast cancer. Some organisations have received FDA approvals for deep learning algorithms for diagnostic analysis. Faster and more accurate image classification can improve diagnostic accuracy compared with relying on human examination of X-rays and MRI results alone.